Table of Contents

Summarize and analyze this article with

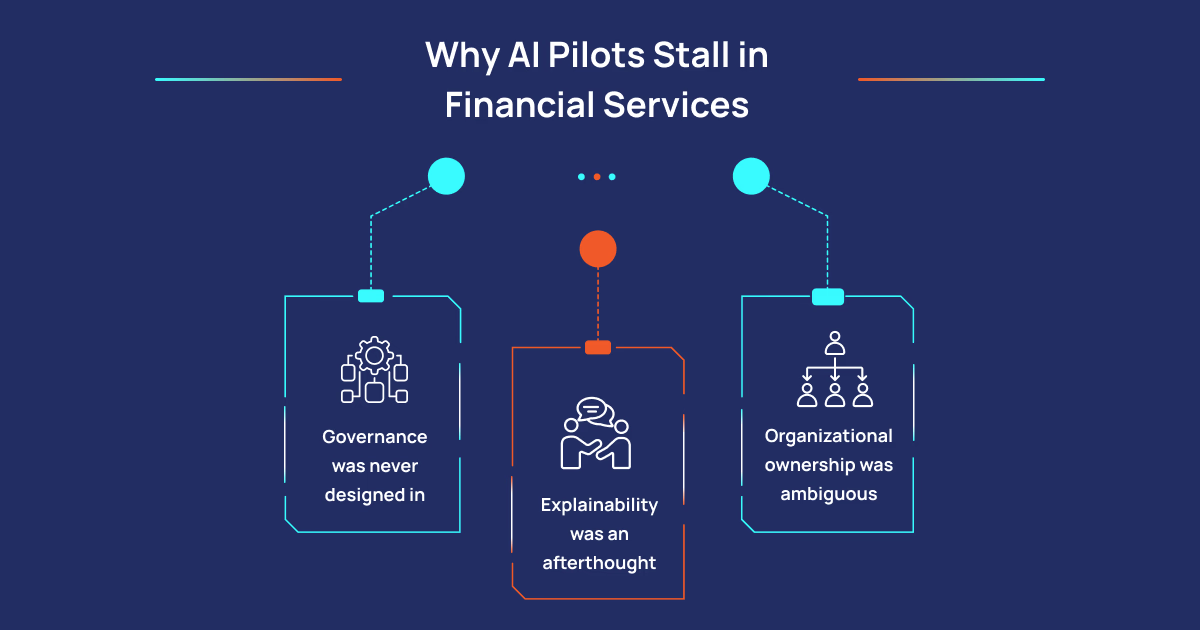

Why AI Pilots Stall in Financial Services

Governance Was Never Designed In

Explainability Was an Afterthought

Organizational Ownership Was Ambiguous

How PiTech Closes the Gap Between AI Pilot and Production

PiTech’s Financial Services AI practice is built around a governance-first delivery model: the compliance architecture comes before the model, not the compliance review after it. Our engagements start with the regulatory reality. Before technical solution development begins, we map the applicable OCC, FDIC, Federal Reserve, CFPB, and state-level AI requirements, define the explainability requirements, design the audit trail architecture, specify the human oversight model, and establish the model validation framework.

This approach differs fundamentally from that of most technology firms, which treat governance as a compliance checkpoint late in the delivery cycle. For regulated financial institutions, governance gaps at production scale create enforcement exposure, so building governance in from the beginning is not optional. It is the delivery methodology that determines whether the system is deployable.

Our Credit and Lending practice designs AI-assisted underwriting and automated decisioning systems with adverse action documentation frameworks built into the architecture, so that ECOA, FCRA, and fair lending requirements are satisfied as a design output rather than a compliance retrofit. Our Fraud and AML practice builds pattern detection and anomaly identification systems with compliance documentation integrated into the workflow, not appended afterward. Our Risk Management practice delivers AI-assisted credit, market, and operational risk modeling that meets the model risk management expectations of Federal Reserve SR 11-7 and OCC Bulletin 2011-12, with independent validation support and ongoing performance monitoring frameworks built in.
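To make "adverse action documentation as a design output" concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not PiTech's implementation: the `CreditDecision` record, its field names, the `audit_entry` helper, and the sample reason text are all hypothetical. The idea is that a denial cannot even be constructed without at least one stated reason, so the documentation exists the moment the decision does. Real ECOA and FCRA notices carry additional required content that this sketch does not model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class CreditDecision:
    """Hypothetical decision record; every name here is an illustrative assumption."""
    application_id: str
    approved: bool
    model_version: str
    # Principal reasons for a denial, written in plain language for the applicant.
    adverse_action_reasons: tuple[str, ...] = ()
    decided_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def __post_init__(self) -> None:
        # Enforce the rule at construction time: no reasons, no denial.
        if not self.approved and not self.adverse_action_reasons:
            raise ValueError(
                "a denial must carry at least one adverse action reason"
            )


def audit_entry(decision: CreditDecision) -> dict:
    """Flatten a decision into a serializable record for an audit trail."""
    return {
        "application_id": decision.application_id,
        "approved": decision.approved,
        "model_version": decision.model_version,
        "adverse_action_reasons": list(decision.adverse_action_reasons),
        "decided_at": decision.decided_at.isoformat(),
    }


if __name__ == "__main__":
    denial = CreditDecision(
        application_id="APP-0001",  # made-up identifier
        approved=False,
        model_version="underwriting-v1",  # made-up version label
        adverse_action_reasons=("Debt-to-income ratio too high",),
    )
    print(audit_entry(denial))
```

Putting the check in the record itself, rather than in a later review step, means any code path that produces a denial has to supply the reasons up front.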

PiTech holds CMMI, ISO 27001, and ISO 9001 certifications. For financial institution clients, these are not marketing credentials. They are evidence that our delivery processes are documented, measured, and consistently applied. The governance documentation we produce in engagements is the output of a delivery process that runs the same way every time, not a deliverable that gets filed and forgotten. When an OCC examiner asks to review model development methodology documentation, the answer is an organized set of consistently produced artifacts, not a scramble.

Our team includes practitioners who have built systems inside federal agencies, defense contractors, and heavily regulated commercial institutions, often under FISMA, FedRAMP, NIST SP 800-53, and sector-specific financial regulatory frameworks at the same time. That background shapes how we approach commercial financial services engagements when the compliance stakes are real: we have operated under those requirements ourselves, not just advised on them from the outside.

What Financial Institutions Should Do Right Now

The 14 percent of organizations that have successfully scaled AI share a trait worth examining: they put governance architecture on the table before starting the pilot, not after it produces results they want to productize. They treat AI governance as an engineering requirement, not a documentation exercise. And they lean on proven process frameworks such as CMMI, ISO/IEC 42001, and the NIST AI RMF as genuine operating infrastructure, not box-checking. Organizations that have been running AI pilots without that foundation have a clear diagnosis: the technology is working, and the delivery model is not. That is a solvable problem, but it requires the right partner.