HIPAA has anchored healthcare data compliance for over two decades. Every audit starts with it. Every vendor references it in their Business Associate Agreements. Every compliance officer knows its rules. But in 2026, HIPAA alone no longer adequately describes the compliance environment for healthcare AI. The Office for Civil Rights issued more AI-related guidance in 2025 than in the previous five years combined. Enforcement actions specifically targeting AI rose 340 percent in that period. And state-level AI legislation that took effect January 1, 2026 — much of which applies to healthcare organizations regardless of federal guidance — has created a compliance stack that most health systems are not yet built to manage.

For mid-size health systems, this has caught compliance teams mid-stride. They are managing AI pilots approved under assumptions that no longer hold. The organizations that navigate this period successfully are not the ones waiting for regulatory clarity. They are the ones that understand what compliance-ready AI deployment actually requires — and have the governance infrastructure already in place.

Why HIPAA Is No Longer Enough

At the federal level, HIPAA’s Privacy and Security Rules still govern how Protected Health Information is collected, stored, used, and disclosed. What has changed is how those rules apply when PHI flows through AI systems: training data pipelines, inference engines, clinical decision support tools, and ambient documentation systems. The BAA covering your EHR vendor does not automatically cover the AI layer on top of it. Many organizations have not mapped that gap.

Texas’s Responsible AI Governance Act, effective January 1, 2026, establishes governance and disclosure requirements for AI systems operating in the state, including in healthcare. California’s AB 489 prohibits AI from implying it holds a healthcare license. Colorado’s AI Act adds transparency and risk management requirements. OCR is expected to make AI Impact Assessments mandatory. For multi-state health systems, the compliance matrix now looks nothing like the single-framework HIPAA world they were built for.

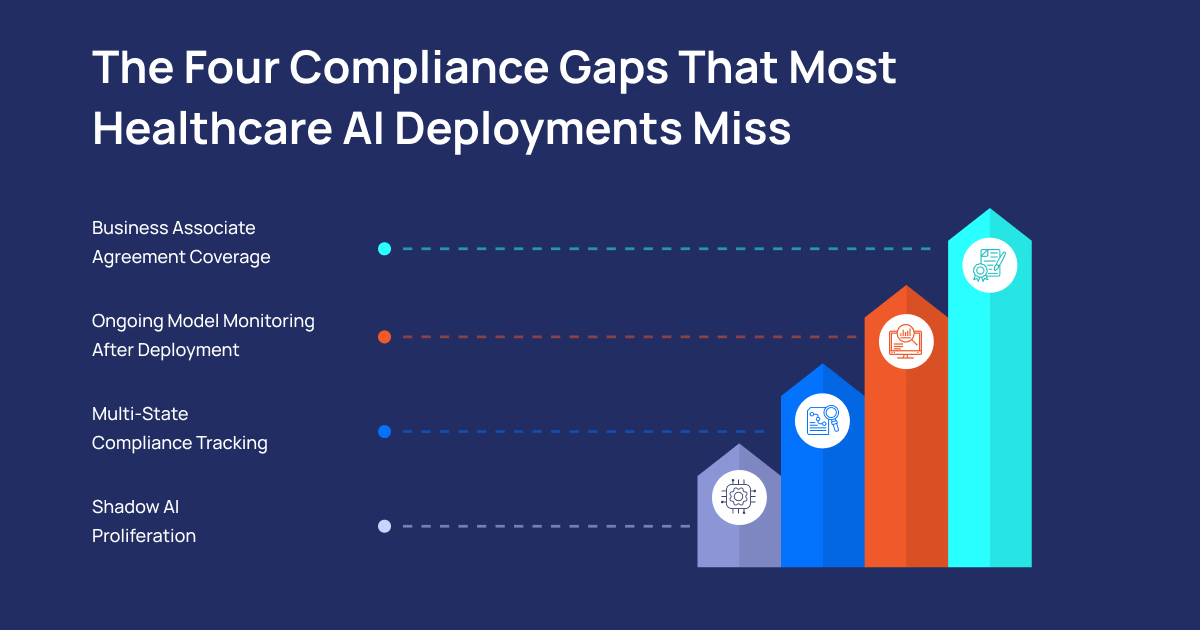

The Four Compliance Gaps That Most Healthcare AI Deployments Miss

Business Associate Agreement Coverage

Most health systems have solid BAA coverage for their EHR vendors and established SaaS platforms. But the AI layer on top of those systems (ambient documentation tools, clinical decision support applications, AI-assisted coding platforms) is often not covered with the specificity today’s environment requires. Who owns the model? Where is training data stored? What happens to PHI in the training pipeline? How are model updates validated before deployment? These questions need documented answers in the vendor relationship, not assumptions.

Ongoing Model Monitoring After Deployment

Clinical AI systems degrade differently from traditional software. Models drift as patient populations change, documentation practices evolve, and real-world case distributions diverge from training data. A monitoring protocol designed for IT system uptime will not catch AI-specific degradation. One AI-assisted coding tool introduced a systematic error in ICD-10 categorization that affected claims accuracy for months before anyone detected it — because the monitoring architecture was designed for availability, not clinical accuracy drift. Health systems need purpose-built AI monitoring, not adapted IT operations tooling.
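To make "accuracy drift" concrete: one common way to watch for it is to compare the distribution of a model's outputs in a recent window against a validated baseline. The Python sketch below computes a Population Stability Index (PSI) over the first character of assigned ICD-10 codes, a coarse grouping used here only for illustration. It assumes the coding tool's outputs are logged; the function names, toy data, and the 0.25 alert threshold (a commonly cited rule of thumb) are illustrative assumptions, not a clinical validation standard.

```python
import math
from collections import Counter

def category_shares(codes, categories, floor=1e-4):
    """Share of codes in each category, floored so empty categories don't produce log(0)."""
    if not codes:
        raise ValueError("need at least one code per window")
    counts = Counter(code[0].upper() for code in codes)  # group by first character of the code
    return {c: max(counts[c] / len(codes), floor) for c in categories}

def population_stability_index(baseline_codes, current_codes):
    """PSI = sum over categories of (current - baseline) * ln(current / baseline)."""
    categories = sorted({c[0].upper() for c in baseline_codes + current_codes})
    base = category_shares(baseline_codes, categories)
    curr = category_shares(current_codes, categories)
    return sum((curr[c] - base[c]) * math.log(curr[c] / base[c]) for c in categories)

# Illustrative threshold; > 0.25 is a commonly cited "significant shift" rule of thumb.
ALERT_THRESHOLD = 0.25

def check_drift(baseline_codes, current_codes, threshold=ALERT_THRESHOLD):
    """Return (psi, alert), where alert is True when the shift exceeds the threshold."""
    psi = population_stability_index(baseline_codes, current_codes)
    return psi, psi > threshold

# Toy data: codes assigned by a hypothetical coding tool in two review windows.
baseline = ["E11.9"] * 50 + ["I10"] * 30 + ["J45.909"] * 20
current = ["E11.9"] * 20 + ["I10"] * 30 + ["J45.909"] * 50
psi, alert = check_drift(baseline, current)
print(f"PSI={psi:.3f} alert={alert}")  # PSI=0.550 alert=True
```

In this toy data the share of E-codes falls from 50 to 20 percent while J-codes rise from 20 to 50 percent, which trips the alert even though the service would have shown full availability throughout. A distribution check like this flags that outputs changed, not that they are wrong, so it complements rather than replaces periodic chart audits.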

Multi-State Compliance Tracking

State AI laws vary in their disclosure requirements, risk assessment mandates, and accountability structures. Managing these as a portfolio (with clear ownership, systematic tracking, and regular review as legislation evolves) is a new operational requirement that most compliance functions have not yet put into practice. Organizations treating this as a legal research exercise rather than an ongoing compliance program will discover the gaps at the worst possible moment.

Shadow AI Proliferation

Sixty-six percent of US physicians actively use AI tools. Only 23 percent of health systems have BAAs in place for their third-party AI solutions. The gap between clinical adoption and organizational governance is not a data point — it is an audit finding waiting to happen. Clinicians adopt AI because it works and because formal governance processes are too slow. Closing the gap requires both technical controls and a governance process that operates faster than the workaround.

How PiTech Helps Healthcare Organizations Navigate This

PiTech’s Healthcare IT and Compliance Modernization practice is built specifically for organizations managing this complexity. Our approach starts where most technology vendors stop — at the governance architecture — and builds compliance into the AI deployment rather than retrofitting it afterward.

We begin with Shadow AI Discovery: a comprehensive inventory of every AI tool in use across the organization, including tools adopted by individual clinicians and departments without IT review. Most health systems discover significant surprises in this exercise. The inventory becomes the foundation for every subsequent governance action. From there, our BAA Remediation service evaluates existing agreements against current regulatory requirements, identifies gaps, executes updated agreements with AI-specific provisions covering training data, model updates, geographic data residency, and deletion, and recommends tool retirement where gaps cannot be adequately closed.
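The discovery-then-remediation sequence above is, at its core, a comparison between an inventory and a set of contract terms. The minimal Python sketch below assumes the inventory has already been collected: each tool records whether IT reviewed it and which of the AI-specific provisions named above its BAA covers, and two helpers report shadow tools and missing provisions. The provision labels, class, function names, and toy tools are all hypothetical; a real gap analysis depends on legal review of the actual agreement text.

```python
from dataclasses import dataclass, field

# Hypothetical labels for the AI-specific BAA provisions named in the text.
REQUIRED_AI_PROVISIONS = frozenset({"training_data", "model_updates", "data_residency", "deletion"})

@dataclass
class AITool:
    name: str
    department: str
    it_reviewed: bool  # False for tools adopted without IT review (shadow AI)
    has_baa: bool
    baa_provisions: set = field(default_factory=set)

def baa_gaps(inventory):
    """Map each tool to the AI-specific provisions its BAA lacks; fully covered tools are omitted.
    A tool with no BAA at all is reported as missing every provision."""
    gaps = {}
    for tool in inventory:
        covered = tool.baa_provisions if tool.has_baa else set()
        missing = REQUIRED_AI_PROVISIONS - covered
        if missing:
            gaps[tool.name] = sorted(missing)
    return gaps

def shadow_tools(inventory):
    """Names of tools in use that never went through IT review."""
    return [t.name for t in inventory if not t.it_reviewed]

# Toy inventory; tool names and departments are invented for illustration.
inventory = [
    AITool("ambient-scribe", "Primary Care", True, True, set(REQUIRED_AI_PROVISIONS)),
    AITool("coding-assistant", "Revenue Cycle", True, True, {"training_data"}),
    AITool("note-summarizer", "Cardiology", False, False),
]
print(shadow_tools(inventory))  # ['note-summarizer']
print(baa_gaps(inventory))
```

Here the fully covered tool drops out, the coding assistant is flagged for three missing provisions, and the unreviewed tool is flagged for all four, which is the list that would feed either an updated agreement or a retirement recommendation.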

Our AI Impact Assessment framework, aligned with OCR’s expected forthcoming guidance, produces the formal pre-deployment documentation that regulators are looking for: what PHI the system touches, how it affects clinical decisions, what bias testing was performed, what human oversight is required, and who is accountable for ongoing performance monitoring. Our HIPAA-Aligned AI Architecture service designs data pipelines that meet Security Rule requirements from ingestion through model output, including the expanded requirements of the 2026 Security Rule overhaul.
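The pre-deployment documentation described above is essentially a structured record with required fields and a sign-off gate. As a hedged illustration only (not an OCR-specified format, since the text describes that guidance as still forthcoming), a Python sketch of such a record might look like this; the class, field names, and example system are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import List, Optional

@dataclass
class AIImpactAssessment:
    """Pre-deployment record; each field answers one of the questions listed in the text."""
    system_name: str
    phi_elements: List[str]        # what PHI the system touches
    clinical_decision_impact: str  # how it affects clinical decisions
    bias_testing: str              # what bias testing was performed
    human_oversight: str           # what human oversight is required
    accountable_owner: str         # who owns ongoing performance monitoring
    approved_by: Optional[str] = None  # sign-off, recorded once documentation is complete

    def missing_fields(self):
        """Names of documentation fields left empty. An empty PHI list also counts,
        so a system that touches no PHI should record that explicitly, e.g. ["none"]."""
        return [f.name for f in fields(self)
                if f.name != "approved_by" and not getattr(self, f.name)]

    def ready_for_deployment(self):
        """Deployable only when every field is documented and someone has signed off."""
        return not self.missing_fields() and self.approved_by is not None

draft = AIImpactAssessment(
    system_name="sepsis-alert-v2",  # hypothetical system
    phi_elements=["vital signs", "lab results"],
    clinical_decision_impact="Flags patients for nurse review; does not order treatment.",
    bias_testing="",  # not yet performed
    human_oversight="Nurse confirms every alert before escalation.",
    accountable_owner="CMIO office",
)
print(draft.missing_fields())        # ['bias_testing']
print(draft.ready_for_deployment())  # False
```

Making the gate a function of the record, rather than a checkbox, means the question "what could you show OCR?" has a mechanical answer: the record either has every field filled and a named approver, or it lists exactly what is missing.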

For multi-state health systems, our Multi-State Compliance Protocol builds disclosure, transparency, and risk assessment processes that satisfy the most demanding requirements across all states of operation, so you maintain one governance framework rather than a separate process for each jurisdiction. Our Continuous Monitoring service establishes ongoing oversight of both AI model performance and compliance posture, because under OCR’s current enforcement approach, point-in-time assessments are not enough.
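Satisfying "the most demanding requirements across all states" can be read as a simple merge rule: an obligation applies everywhere if any state imposes it, and where states set different review cadences, the shortest one governs. The Python sketch below encodes that rule over a deliberately simplified requirement profile. The fields, function name, and the State A and State B values are hypothetical placeholders, not summaries of any actual statute.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class StateRequirements:
    """Simplified, hypothetical requirement profile for one state."""
    patient_ai_disclosure: bool   # must patients be told AI is in use?
    risk_assessment: bool         # is a documented risk assessment required?
    review_interval_days: int     # how often the assessment must be revisited

def strictest(profiles):
    """Merge profiles into one that meets every state's bar: a requirement applies
    if any state imposes it, and the shortest review interval wins."""
    profiles = list(profiles)
    if not profiles:
        raise ValueError("at least one state profile is required")
    return StateRequirements(
        patient_ai_disclosure=any(p.patient_ai_disclosure for p in profiles),
        risk_assessment=any(p.risk_assessment for p in profiles),
        review_interval_days=min(p.review_interval_days for p in profiles),
    )

# Placeholder values only; real profiles must come from legal review.
operating_states = {
    "State A": StateRequirements(patient_ai_disclosure=True, risk_assessment=False, review_interval_days=365),
    "State B": StateRequirements(patient_ai_disclosure=False, risk_assessment=True, review_interval_days=180),
}
print(strictest(operating_states.values()))
# StateRequirements(patient_ai_disclosure=True, risk_assessment=True, review_interval_days=180)
```

The design choice is one merged profile to implement and audit: when a state changes its law, only that state's profile is updated and the merge is rerun. The trade-off is that operations in lighter-touch states inherit the heavier obligations.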

PiTech holds ISO/IEC 27001 and ISO 9001 certifications and operates under CMMI-appraised delivery processes. These are the credentials healthcare procurement committees look for when evaluating technology partners for programs where compliance failure has patient safety implications. We apply the same security rigor used in federal health agency engagements to commercial healthcare clients, because the stakes in clinical AI governance are not lower than in government work.

What Compliance-Ready AI Deployment Looks Like

Healthcare organizations that handle this well treat AI governance as an architectural discipline rather than a documentation exercise. They run formal AI Impact Assessments before deployment. They build monitoring that tracks clinical accuracy and fairness metrics alongside system uptime. They maintain vendor accountability beyond the BAA through audit rights and model change notification provisions. And they operate multi-state compliance as a portfolio management function with clear ownership, not as a reactive legal exercise.

The question every compliance officer should ask right now is simple: if OCR audited your most recently deployed AI system tomorrow, what could you show them? The answer to that question defines the compliance work that needs to happen now, not after the next enforcement action. HIPAA is still the floor. But in 2026, that floor is higher, the ceiling is moving, and the organizations that come through this period intact are the ones who treat AI compliance as an operational discipline — not a procurement checkbox.