Introduction

Banks are accelerating artificial intelligence adoption across credit scoring, fraud detection, and customer service. Yet growth brings scrutiny. Strong AI risk management practices are now essential to satisfy regulators, protect customers, and sustain trust. Institutions that embed AI compliance and transparent governance into every stage of the model lifecycle are better positioned to scale innovation without penalties. This guide explains how AI model risk management, explainable AI, and structured AI governance frameworks help financial institutions stay compliant while unlocking measurable value.

Why AI Risk Management Is Now a Regulatory Priority

Supervisory bodies increasingly expect formal AI regulatory reporting processes, documented validation, and clear accountability across the AI lifecycle. Many institutions still rely on fragmented controls, creating significant operational and compliance exposure.

Global AI Regulations Reshaping Banking Compliance

AI governance in financial services is rapidly shifting from internal policy to enforceable regulation. New rules such as the EU AI Act classify several banking use cases, including creditworthiness assessment and credit scoring of individuals, as high risk and require transparency, human oversight, and ongoing monitoring. Operational resilience regulations, such as the EU's Digital Operational Resilience Act (DORA), are also increasing scrutiny of third-party AI model risk and technology dependencies.

In the United States, supervisory expectations are extending traditional model risk management guidance, such as SR 11-7, toward full lifecycle accountability for AI. This shift makes explainable AI, automated regulatory reporting, and enterprise-wide AI governance critical to sustained AI risk management and dependable compliance.

Banks that align early with these regulatory expectations can reduce remediation exposure, accelerate approval timelines, and build stronger long-term supervisory trust.

Rising Model Complexity and Compliance Pressure

Modern machine learning introduces opaque decision paths, vendor dependencies, and continuous learning behaviour. Without disciplined model risk management, explainability gaps and unmanaged drift can trigger supervisory findings or financial penalties.

Compliance as the Biggest Adoption Barrier

Industry surveys frequently cite compliance concerns, rather than technical limitations, as the main factor slowing AI deployment. Stronger AI risk controls help banks scale AI safely and earn regulatory trust, turning compliance into a business advantage instead of a barrier.

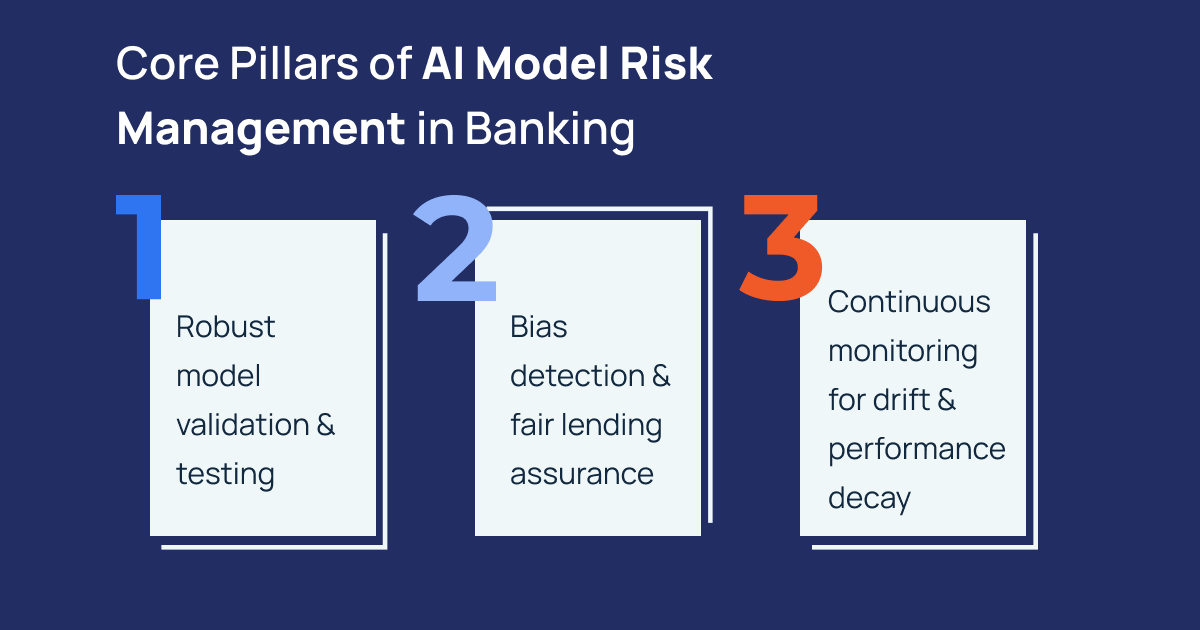

Core Pillars of AI Model Risk Management in Banking

Effective AI model risk management aligns governance, validation, monitoring, and documentation across the full lifecycle.

1. Robust model validation and testing

Independent model validation confirms conceptual soundness, performance stability, and regulatory alignment before deployment. Stress testing complements it by simulating adverse scenarios to uncover hidden weaknesses.
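As a rough illustration of scenario-based stress testing, the Python sketch below shocks a small portfolio under two adverse scenarios and flags any scenario whose average probability of default (PD) exceeds a tolerance multiple of the baseline. The logistic model, its coefficients, the scenario sizes, and the 1.5x tolerance are all hypothetical values chosen for illustration; a real validation exercise would use the institution's own fitted model and its own scenario design.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical logistic PD model; coefficients are illustrative, not fitted.
INTERCEPT = -3.0
COEF_INCOME = -0.02  # higher income lowers default risk
COEF_DTI = 4.0       # higher debt-to-income raises default risk


@dataclass(frozen=True)
class Applicant:
    income: float  # annual income, in thousands
    dti: float     # debt-to-income ratio, between 0 and 1


def probability_of_default(a: Applicant) -> float:
    z = INTERCEPT + COEF_INCOME * a.income + COEF_DTI * a.dti
    return 1.0 / (1.0 + math.exp(-z))


def shock(a: Applicant, income_drop: float, dti_rise: float) -> Applicant:
    """Adverse scenario: cut income by a fraction and add to DTI (capped at 1)."""
    return Applicant(income=a.income * (1.0 - income_drop), dti=min(1.0, a.dti + dti_rise))


def mean_pd(portfolio: List[Applicant]) -> float:
    return sum(probability_of_default(a) for a in portfolio) / len(portfolio)


def run_stress_test(
    portfolio: List[Applicant],
    scenarios: Dict[str, Tuple[float, float]],
    tolerance: float = 1.5,
) -> Tuple[Dict[str, float], List[str]]:
    """Mean PD per scenario, plus scenarios whose PD exceeds tolerance x baseline."""
    results = {"baseline": mean_pd(portfolio)}
    for name, (income_drop, dti_rise) in scenarios.items():
        results[name] = mean_pd([shock(a, income_drop, dti_rise) for a in portfolio])
    breaches = [n for n, pd in results.items() if pd > tolerance * results["baseline"]]
    return results, breaches


portfolio = [Applicant(60, 0.30), Applicant(90, 0.20), Applicant(40, 0.45)]
results, breaches = run_stress_test(
    portfolio, {"mild": (0.05, 0.02), "severe": (0.30, 0.15)}
)
```

Here only the severe scenario more than doubles the baseline PD and is flagged; a breach would typically feed back into validation findings rather than block the model automatically.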

2. Bias detection and fair lending assurance

Historical data can encode discrimination, making fair lending bias testing essential. Automated fairness metrics and segmented performance analysis reduce legal and reputational exposure while supporting compliance with fair lending and AI ethics requirements.
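One common screening metric for segmented outcome analysis is the adverse impact ratio: each group's approval rate divided by a reference group's rate, with values below 0.8 (the "four-fifths" rule of thumb borrowed from U.S. employment guidance) flagged for review. The minimal sketch below assumes decisions arrive as (segment, approved) pairs; the group labels and counts are hypothetical, and a low ratio is a prompt for investigation, not a legal finding of discrimination.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Decision = Tuple[str, bool]  # (segment label, approved?)


def approval_rates(decisions: Iterable[Decision]) -> Dict[str, float]:
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def adverse_impact_ratios(decisions: Iterable[Decision], reference: str) -> Dict[str, float]:
    """Each group's approval rate divided by the reference group's approval rate."""
    rates = approval_rates(decisions)
    ref_rate = rates[reference]
    if ref_rate == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return {g: r / ref_rate for g, r in rates.items() if g != reference}


def flag_groups(decisions: Iterable[Decision], reference: str, threshold: float = 0.8) -> List[str]:
    """Groups whose adverse impact ratio falls below the screening threshold."""
    ratios = adverse_impact_ratios(decisions, reference)
    return sorted(g for g, r in ratios.items() if r < threshold)


# Illustrative data: group_a approved 80 of 100, group_b approved 60 of 100.
decisions = (
    [("group_a", True)] * 80 + [("group_a", False)] * 20
    + [("group_b", True)] * 60 + [("group_b", False)] * 40
)
ratios = adverse_impact_ratios(decisions, reference="group_a")
flagged = flag_groups(decisions, reference="group_a")
```

With these counts group_b's ratio is 0.75, so it is flagged for deeper analysis, for example checking whether legitimate credit factors explain the gap.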

3. Continuous monitoring for drift and performance decay

Production monitoring must detect behavioural changes early. Mature AI governance programs integrate alerts, retraining triggers, and audit logs to maintain accuracy and compliance over time.
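Drift detection is often implemented with the Population Stability Index (PSI), which compares the binned distribution of a score or feature in production (shares a_i) against a reference sample (shares e_i) as PSI = Σ (a_i - e_i) * ln(a_i / e_i). The sketch below assumes fixed bin edges and uses the widely quoted industry rule of thumb of 0.10 for a warning and 0.25 for an alert; those thresholds, the bin edges, and the synthetic scores are assumptions to calibrate per model, not regulatory limits.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def bin_shares(values: Sequence[float], edges: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Share of values in each bin defined by sorted cut points; empty bins are floored at eps."""
    if not values:
        raise ValueError("cannot compute shares of an empty sample")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], edges: Sequence[float]) -> float:
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_status(value: float, warn: float = 0.10, alert: float = 0.25) -> str:
    """Map a PSI value to a monitoring status (thresholds are assumed, tune per model)."""
    if value >= alert:
        return "alert"
    if value >= warn:
        return "warn"
    return "stable"


baseline_scores = list(range(100))
edges = [25, 50, 75]
same = psi(baseline_scores, baseline_scores, edges)
shifted = psi(baseline_scores, [s + 50 for s in baseline_scores], edges)
```

An identical sample yields a PSI of zero, while the shifted sample lands far above the alert threshold; in practice the status would drive the alerting and retraining triggers described above.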

Explainable AI and Transparency Requirements

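Regulators increasingly demand interpretable outcomes, especially for credit decisions. In the United States, for example, lenders must give declined applicants the specific principal reasons for adverse action under the Equal Credit Opportunity Act and Regulation B.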

Moving from black box to accountable AI

Adopting explainability techniques such as feature attribution, surrogate models, and decision traceability enables institutions to justify outcomes during audits and customer disputes.
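For linear and logistic scorecards, feature attribution can be computed exactly: each feature's contribution to the log-odds is its weight times the applicant's deviation from a reference profile, and the contributions sum to the total gap. The sketch below ranks the most adverse contributions to produce candidate reason codes. The feature names, weights, and baseline profile are hypothetical; nonlinear models usually need approximate attribution methods such as SHAP instead.

```python
from typing import Dict, List

# Hypothetical logistic scorecard: positive weights push an applicant toward decline.
INTERCEPT = -2.0
WEIGHTS = {"utilization": 2.5, "recent_delinquencies": 0.8, "months_on_file": -0.01, "dti": 3.0}
# Reference profile (e.g. portfolio averages) that contributions are measured against.
BASELINE = {"utilization": 0.30, "recent_delinquencies": 0.0, "months_on_file": 120.0, "dti": 0.35}


def log_odds(x: Dict[str, float]) -> float:
    return INTERCEPT + sum(w * x[f] for f, w in WEIGHTS.items())


def contributions(x: Dict[str, float]) -> Dict[str, float]:
    """Per-feature contribution to log-odds relative to the baseline profile."""
    return {f: w * (x[f] - BASELINE[f]) for f, w in WEIGHTS.items()}


def reason_codes(x: Dict[str, float], top_n: int = 2) -> List[str]:
    """Features that pushed this applicant furthest toward decline, largest first."""
    adverse = [(f, c) for f, c in contributions(x).items() if c > 0]
    adverse.sort(key=lambda fc: fc[1], reverse=True)
    return [f for f, _ in adverse[:top_n]]


applicant = {"utilization": 0.90, "recent_delinquencies": 2, "months_on_file": 60, "dti": 0.40}
codes = reason_codes(applicant)
# For a linear model the contributions add up exactly to the log-odds gap.
total = sum(contributions(applicant).values())
gap = log_odds(applicant) - log_odds(BASELINE)
```

The exact additivity is what makes the explanation auditable: the listed reasons account for the whole score difference, with nothing hidden in an approximation.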

Documentation and audit readiness

Structured regulatory reporting processes create defensible evidence for OCC, FDIC, and other supervisory reviews. Clear documentation also supports internal risk committees and board oversight.

Managing Third-Party and GenAI Risks

Third-party accountability expectations

Supervisors hold banks responsible for third-party AI model risk, even when solutions are outsourced. Strong due diligence, contractual controls, and ongoing monitoring are therefore mandatory.

Emerging GenAI compliance frameworks

New GenAI compliance frameworks address hallucination risk, data leakage, and uncontrolled outputs. Integrating these controls into enterprise AI governance helps prevent operational and reputational incidents.
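Data-leakage controls for GenAI often include an output filter that scans responses before they reach a user. The sketch below redacts 13 to 19 digit sequences that pass the Luhn checksum (the check used for payment card numbers) as well as email addresses, and returns what it found so the event can be logged. The patterns and placeholder tokens are illustrative assumptions; this covers only the leakage side, and hallucination risk needs separate controls such as grounding checks and human review.

```python
import re
from typing import List, Tuple

# Illustrative patterns: 13-19 digit runs (card-number length) and email addresses.
CARD_RE = re.compile(r"\b\d{13,19}\b")
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum: double every second digit from the right; total must divide by 10."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_output(text: str) -> Tuple[str, List[str]]:
    """Redact likely card numbers and emails; return cleaned text and finding types."""
    findings: List[str] = []

    def card_sub(m: re.Match) -> str:
        if luhn_valid(m.group()):
            findings.append("card_number")
            return "[REDACTED-CARD]"
        return m.group()  # digit runs that fail Luhn are left untouched

    def email_sub(m: re.Match) -> str:
        findings.append("email")
        return "[REDACTED-EMAIL]"

    text = CARD_RE.sub(card_sub, text)
    text = EMAIL_RE.sub(email_sub, text)
    return text, findings


clean, found = redact_output("Card 4111111111111111 is linked to jane.doe@example.com.")
untouched, none_found = redact_output("Reference 4111111111111112 only.")
```

The Luhn check keeps ordinary reference numbers from being redacted, which reduces false positives that would otherwise degrade the assistant's answers.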

Building a Scalable AI Governance Framework

Sustainable AI risk management requires centralized oversight and clear ownership.

Enterprise model inventory and lifecycle control

A unified registry enables consistent model risk management, validation tracking, and retirement governance. This visibility reduces audit failures and improves cross-functional coordination.
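At its core, a model inventory is a registry of records with owners, risk tiers, lifecycle stages, and validation dates. The sketch below enforces permitted stage transitions, blocks deployment of unvalidated models, and lists production models overdue for revalidation. The stage names, tier-based revalidation intervals, and model identifiers are hypothetical; the optional `vendor` field is one way to keep third-party models in the same inventory.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from enum import Enum
from typing import Dict, List, Optional, Tuple


class Stage(Enum):
    DEVELOPMENT = "development"
    VALIDATION = "validation"
    PRODUCTION = "production"
    RETIRED = "retired"


# Permitted lifecycle moves; anything else is rejected.
ALLOWED = {
    Stage.DEVELOPMENT: {Stage.VALIDATION},
    Stage.VALIDATION: {Stage.DEVELOPMENT, Stage.PRODUCTION},
    Stage.PRODUCTION: {Stage.VALIDATION, Stage.RETIRED},
    Stage.RETIRED: set(),
}

# Hypothetical revalidation cadence by risk tier, in days (tier 1 = highest risk).
REVALIDATION_DAYS = {1: 365, 2: 730, 3: 1095}


@dataclass
class ModelRecord:
    model_id: str
    owner: str
    risk_tier: int
    vendor: Optional[str] = None  # set for third-party models
    stage: Stage = Stage.DEVELOPMENT
    last_validated: Optional[date] = None
    history: List[Tuple[date, Stage, Stage]] = field(default_factory=list)

    def transition(self, new_stage: Stage, on: date) -> None:
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(f"{self.model_id}: {self.stage.value} -> {new_stage.value} not allowed")
        if new_stage is Stage.PRODUCTION and self.last_validated is None:
            raise ValueError(f"{self.model_id}: cannot deploy without a recorded validation")
        self.history.append((on, self.stage, new_stage))
        self.stage = new_stage

    def validation_due(self) -> Optional[date]:
        if self.last_validated is None:
            return None
        return self.last_validated + timedelta(days=REVALIDATION_DAYS[self.risk_tier])


class ModelRegistry:
    def __init__(self) -> None:
        self._models: Dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        if record.model_id in self._models:
            raise ValueError(f"duplicate model id: {record.model_id}")
        self._models[record.model_id] = record

    def overdue(self, today: date) -> List[str]:
        """Production models whose revalidation date has already passed."""
        return sorted(
            m.model_id
            for m in self._models.values()
            if m.stage is Stage.PRODUCTION
            and m.validation_due() is not None
            and m.validation_due() < today
        )


registry = ModelRegistry()
pd_model = ModelRecord("credit-pd-v3", owner="retail-credit-risk", risk_tier=1)
fraud_model = ModelRecord("fraud-gbm-v1", owner="fraud-analytics", risk_tier=3, vendor="example-vendor")
for m in (pd_model, fraud_model):
    registry.register(m)
    m.transition(Stage.VALIDATION, on=date(2023, 1, 2))
    m.last_validated = date(2023, 1, 15)
    m.transition(Stage.PRODUCTION, on=date(2023, 1, 20))
```

Keeping the transition history on each record gives auditors a direct trail of when a model moved into or out of production.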

Integration with RegTech and automation

Modern RegTech solutions automate monitoring, reporting, and evidence collection for AI compliance. Automation reduces analyst workload while strengthening supervisory confidence.
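Automated evidence collection is more defensible when the record is tamper-evident. One simple approach is a hash chain: each entry's SHA-256 digest covers its own content plus the previous entry's digest, so editing any earlier entry breaks verification. The sketch below is a minimal, in-memory illustration with hypothetical event fields; a production system would also need durable storage, access controls, and trusted timestamps.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


@dataclass
class EvidenceLog:
    entries: List[Dict[str, Any]] = field(default_factory=list)

    def append(self, event: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        stored = json.loads(json.dumps(event))  # deep copy so later caller edits don't leak in
        digest = _digest(prev, stored)
        self.entries.append({"event": stored, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every digest in order; any edited or reordered entry fails."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True


log = EvidenceLog()
log.append({"model_id": "credit-pd-v3", "check": "psi", "value": 0.04, "status": "stable"})
log.append({"model_id": "credit-pd-v3", "check": "adverse_impact_ratio", "value": 0.91})
intact = log.verify()
log.entries[0]["event"]["value"] = 0.01  # simulate after-the-fact tampering
tampered = log.verify()
```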

Real-World Compliance Failures and Lessons

Regulatory actions often stem from weak documentation, unmanaged drift, or insufficient fairness testing. Institutions without structured AI model risk management frequently face remediation orders, capital impacts, or reputational damage. Conversely, banks that invest early in AI governance and explainable AI tend to move through regulatory review faster and scale innovation more safely.

Conclusion

Artificial intelligence can transform banking efficiency, risk detection, and customer experience. However, sustainable value depends on disciplined AI model risk management, transparent and explainable models, and enterprise-wide AI governance. Institutions that operationalize AI compliance, automate regulatory reporting, and proactively control the operational risks of AI will innovate faster while satisfying regulators. In a landscape where compliance increasingly determines competitive advantage, mature AI risk management is the foundation for trustworthy, scalable digital banking.

Turn AI Compliance into a Competitive Advantage

Leading banks and other financial institutions are moving beyond fragmented controls toward integrated AI governance and lifecycle risk management. PiTech enables this shift through regulatory-aligned frameworks, continuous monitoring, and enterprise-grade compliance automation.